You may have heard of the Boy Scout Rule. (I hadn’t until I watched a recorded presentation by Robert C. “Uncle Bob” Martin.)

You should always leave the campground cleaner than you found it.

Applied to programming, it means we should always aim to leave the code cleaner than we found it. That sounds great, but before we take on the mission, it pays to ask a couple of questions:

1. What is clean code?

2. When and what should we clean?

Clean Code

What is clean code? Wiktionary defines it like this:

software code that is formatted correctly and in an organized manner so that another coder can easily read or modify it

That is, by necessity, a rather vague definition. The goal of being readable and modifiable by another programmer, although highly subjective, is pretty clear. But what is a correct format? What does an organized manner look like in code? Let’s see if we can make it a little more explicit.

Code Readability

The easiest way to mess up otherwise good code is to make it inconsistent. To me, it really doesn’t matter where we put the curly braces, whether we use camelCase or PascalCase for variable names, or whether we indent with spaces or tabs. It’s when we mix styles that we end up with sloppy code.

Another way to make code hard to take in is to make it too compact. Just like any other text, code becomes more readable when it has some white space.

Although the definition above says nothing about it, good naming of methods, classes, variables, and so on is the essence of readable code. Code with poorly named abstractions is not clean code.
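As a small illustration, here is a hypothetical before-and-after in C#, the language of this post’s examples. Both methods compute the same total; only the names differ. The class, the method names and the VAT calculation are invented for this sketch.

```csharp
using System.Linq;

// Hypothetical example: one calculation written twice, first with
// poor names and then with names that say what the code is for.
public static class Naming
{
    // Before: the reader has to work out what "d", "x" and "Calc" mean.
    public static decimal Calc(decimal[] d, decimal x)
    {
        return d.Sum() * (1 + x);
    }

    // After: same logic, but the names carry the intent.
    public static decimal TotalPriceIncludingVat(decimal[] itemPrices, decimal vatRate)
    {
        return itemPrices.Sum() * (1 + vatRate);
    }
}
```

The compiler treats both versions identically; the difference is entirely for the next human reader.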

Organized for Reusability

Formatting code is easy. Designing for readability, on the other hand, is harder. How do we organize the code so that it is easy to read and modify?

One thing we know is that long chunks of code are harder to read and harder to reuse. So one goal should be to have small methods. But at what cost? For instance, should we refactor code like this?

```csharp
SomeInterface si = (SomeInterface)someObject;
doSomething(si);
```

Into this?

```csharp
doSomething((SomeInterface)someObject);
```

What about code like this?

```csharp
string someString;
if (a >= b)
    someString = "a is bigger or equal to b";
else
    someString = "b is bigger";
```

Into this?

```csharp
string someString = a >= b ? "a is bigger or equal to b" : "b is bigger";
```

In my opinion, the readability gains of these refactorings are questionable. Rather than reducing the number of lines in more or less clever ways, it is better to focus on improving abstractions.

Extract ’til you drop

Abstractions are things with names, like methods or classes. You use them to separate the whats from the hows. They are your best weapons in the fight for readability, reusability and flexibility.

A good way to improve abstractions in existing code is to extract methods from big methods, and classes from bulky classes. You can do this until there is nothing more to extract. As Robert C. Martin puts it, extract until you drop.
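Here is a minimal sketch of what extracting methods can look like in C#. The invoice classes, method names and output format are hypothetical, made up for illustration. The first version of `Render` both calculates and formats; the second extracts each “how” into a small, named method so that `Render` itself reads as a list of “whats”.

```csharp
using System.Collections.Generic;
using System.Globalization;
using System.Linq;
using System.Text;

// Hypothetical invoice line: a description, a quantity and a unit price.
public sealed class InvoiceLine
{
    public InvoiceLine(string description, int quantity, decimal unitPrice)
    {
        Description = description;
        Quantity = quantity;
        UnitPrice = unitPrice;
    }

    public string Description { get; }
    public int Quantity { get; }
    public decimal UnitPrice { get; }
}

// Before: one method that calculates amounts, sums them and formats the output.
public static class InvoiceBefore
{
    public static string Render(IReadOnlyList<InvoiceLine> lines)
    {
        var sb = new StringBuilder();
        decimal total = 0;
        foreach (var line in lines)
        {
            decimal amount = line.Quantity * line.UnitPrice;
            total += amount;
            sb.AppendLine(string.Format(CultureInfo.InvariantCulture,
                "{0} x{1}: {2:0.00}", line.Description, line.Quantity, amount));
        }
        sb.AppendLine(string.Format(CultureInfo.InvariantCulture, "Total: {0:0.00}", total));
        return sb.ToString();
    }
}

// After: each step is extracted into a small, named method,
// so Render only states what happens, not how.
public static class InvoiceAfter
{
    public static string Render(IReadOnlyList<InvoiceLine> lines)
    {
        var sb = new StringBuilder();
        foreach (var line in lines)
            sb.AppendLine(FormatLine(line));
        sb.AppendLine(FormatTotal(Total(lines)));
        return sb.ToString();
    }

    private static decimal Amount(InvoiceLine line) => line.Quantity * line.UnitPrice;

    private static decimal Total(IEnumerable<InvoiceLine> lines) => lines.Sum(Amount);

    private static string FormatLine(InvoiceLine line) =>
        string.Format(CultureInfo.InvariantCulture, "{0} x{1}: {2:0.00}",
            line.Description, line.Quantity, Amount(line));

    private static string FormatTotal(decimal total) =>
        string.Format(CultureInfo.InvariantCulture, "Total: {0:0.00}", total);
}
```

Both versions produce the same output; only the structure changed. Going further, for example by moving the formatting methods into their own class, would be the next step in extracting until you drop.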

That leads me to my own definition of clean code.

• It is readable.

• It is consistent.

• It consists of fine-grained, reusable abstractions with helpful names.

When to clean

Changing code always carries risk, and “cleaning” is no exception. You could:

• Introduce bugs

• Cause build breaks

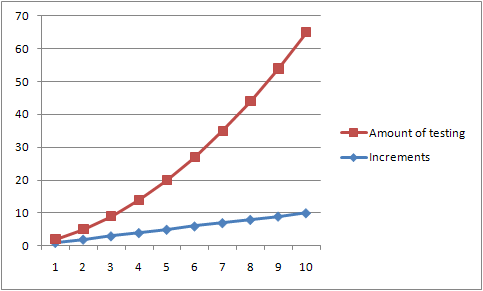

• Create merge conflicts

So there are good reasons not to get carried away. A major enabling factor is how well your code is covered by automated tests: the higher the coverage, the more aggressive the cleaning you can afford, since the tests will catch many of the bugs you introduce. A team with no automated tests should be more careful about what kind of cleaning it does.

Also, to keep from annoying your fellow programmers, avoid sweeping changes that may cause check-in conflicts for others. Restrict your cleaning to the campground, the part of the code you’re currently working on. Don’t venture out on forest-wide cleaning missions.

If the team does not share common coding rules, you risk ending up in “Curly Brace Wars” or other religious software wars driven by individual preferences. These lead to inconsistency, annoyance and wasted energy. The only way to avoid them is to agree on a common set of coding rules.

With all that said, I have never imposed “cleaning” restrictions on my teams. They are allowed to do whatever refactorings they find necessary. If they are actively “cleaning” the code, it means they care about quality, and that’s the way I like it. In the rare cases where excessive cleaning causes problems, most teams will handle it and adjust their processes accordingly.

Cheers!